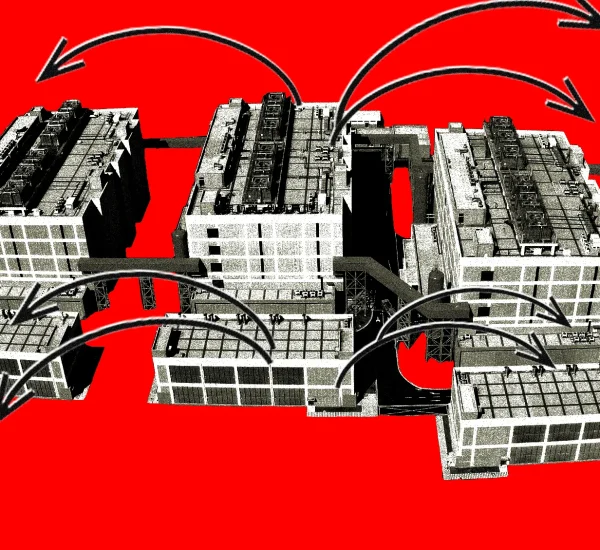

A platform can lose user trust long before it notices a breach, a spam wave, or a fake account network. The damage often starts quietly: odd login patterns, strange posting bursts, scraped listings, fake reviews, or checkout abuse that looks harmless until it spreads. For American businesses that depend on digital traffic, automated activity reviews are no longer a security add-on. They are part of keeping the front door steady while real customers move through it.

Stronger review systems help teams spot patterns that human moderators would miss at scale, especially when bad actors try to hide inside normal-looking behavior. A marketplace in Texas, a fintech app in New York, or a subscription brand in California may face different risks, but the pressure is the same: users expect fast access, fair treatment, and protection from abuse. Brands that invest in visibility and digital growth also need to protect the traffic and engagement they earn.

Better Reviews Protect the Business Before Abuse Becomes Obvious

Most platform abuse does not arrive wearing a sign. It blends into ordinary activity until the pattern becomes too large to ignore. That is why smarter review systems matter: they catch weak signals early, before fraud, spam, scraping, or fake engagement turns into a public problem.

How bot traffic review catches patterns humans miss

A human moderator can notice one strange account. A bot traffic review system can notice 4,000 accounts behaving almost the same way across different states, devices, and time windows. For a U.S. platform handling large user volume, that is the difference between removing one bad account and seeing the whole campaign behind it.

The strongest systems do not judge activity from one action alone. They compare timing, session depth, device signals, repeated paths, failed attempts, and behavior after login. A user who forgets a password twice is normal. A group of accounts failing logins from rotating locations, then hitting the same checkout path, deserves attention.
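The login example above can be sketched in a few lines of Python. This is a minimal illustration, not a production detector: the `Event` fields, the event names, and both thresholds are assumptions made up for the example, and a real system would weigh many more signals (timing, session depth, device data).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    # All field names are hypothetical; adapt them to your own event log.
    account: str
    kind: str      # "login_failed", "login_ok", or "page_view"
    location: str  # coarse location label, such as a state
    path: str      # path visited; empty for login events
    ts: float      # timestamp in seconds


def suspicious_accounts(events, min_failed_locations=3):
    """Map each account with failed logins from several distinct locations
    to the first path it visited after a successful login."""
    by_account = defaultdict(list)
    for e in sorted(events, key=lambda e: e.ts):
        by_account[e.account].append(e)

    flagged = {}
    for account, evs in by_account.items():
        failed_locations = {e.location for e in evs if e.kind == "login_failed"}
        if len(failed_locations) < min_failed_locations:
            continue  # a forgotten password or two is normal
        logged_in = False
        for e in evs:
            if e.kind == "login_ok":
                logged_in = True
            elif logged_in and e.kind == "page_view":
                flagged[account] = e.path
                break
    return flagged


def coordinated_clusters(events, min_accounts=3, min_failed_locations=3):
    """Group flagged accounts by shared post-login path and keep only
    groups large enough to look coordinated."""
    by_path = defaultdict(set)
    for account, path in suspicious_accounts(events, min_failed_locations).items():
        by_path[path].add(account)
    return {p: accts for p, accts in by_path.items() if len(accts) >= min_accounts}


# Four accounts fail from three locations each, then hit the same path.
events = []
for i in range(4):
    acct = f"acct{i}"
    for j, loc in enumerate(["TX", "NY", "CA"]):
        events.append(Event(acct, "login_failed", loc, "", i * 100 + j))
    events.append(Event(acct, "login_ok", "FL", "", i * 100 + 10))
    events.append(Event(acct, "page_view", "FL", "/checkout/gift-card", i * 100 + 11))

# An ordinary user who mistypes a password twice from one place.
events += [
    Event("alice", "login_failed", "TX", "", 5),
    Event("alice", "login_failed", "TX", "", 6),
    Event("alice", "login_ok", "TX", "", 7),
    Event("alice", "page_view", "TX", "/checkout/gift-card", 8),
]

clusters = coordinated_clusters(events)
print(clusters)
```

The ordinary user lands on the same checkout path but is never flagged, because the sketch only reacts when the rotating-location failures and the shared path appear together across several accounts.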

Good bot traffic review also protects honest users from blanket restrictions. Bad systems block too much and punish loyal customers. Better systems separate clumsy human behavior from coordinated abuse, which keeps security firm without making the site feel hostile.

Why platform security checks need business context

Security tools fail when they treat every platform the same. A ticketing site, a banking app, and a local news comment section do not share the same risk profile. Platform security checks should reflect how the business works, what users expect, and where attackers gain value.

An online retailer may care most about account takeovers, discount abuse, and inventory scraping. A social platform may need to detect coordinated spam, impersonation, and fake engagement. A healthcare portal in the United States has a different burden because privacy expectations are higher and mistakes carry deeper consequences.

Context turns raw signals into better decisions. A sudden spike in page views may be a threat for one site and a successful campaign for another. The review system needs to understand that difference, or it becomes noise with a dashboard attached.

Strong Review Systems Keep Trust From Turning Fragile

Trust on a platform feels solid until one bad experience cracks it. A fake listing, stolen account, spammed inbox, or suspicious review can make users question the whole environment. Better review systems protect the quiet confidence that keeps people coming back.

Why user trust signals matter more than vanity metrics

Traffic numbers can flatter a business while hiding rot underneath. A platform may celebrate rising signups while fake accounts flood the system. User trust signals tell a more honest story because they reflect whether real people feel safe enough to stay, buy, post, or return.

These signals include complaint rates, suspicious account reports, chargeback patterns, failed login clusters, review quality, session behavior, and post-action feedback. They are not glamorous. They are useful because they show the health of the space behind the numbers.

American consumers have grown more alert to scams, fake reviews, and account abuse. They may not know what happens behind the screen, but they notice when something feels off. Once that feeling appears, marketing has to work twice as hard to win back basic confidence.

How automated abuse detection supports fair access

Security can become unfair when it relies on blunt rules. Automated abuse detection should help platforms reduce harm without treating every unusual user like a threat. That balance matters for users who travel, share devices, use privacy tools, or have inconsistent access patterns.

A college student logging in from campus Wi-Fi and home Wi-Fi should not be punished for ordinary movement. A small business owner checking orders from different devices should not be locked out because the system lacks patience. Better systems look for clusters of risk instead of grabbing one signal and overreacting.
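One way to picture "clusters of risk" is a small scoring function in which no single signal can push a user into the highest tier. The sketch below is illustrative only: the signal names, weights, and thresholds are invented for the example and would need to come from a platform's own data.

```python
# Hypothetical signal weights; these are assumptions, not recommended values.
WEIGHTS = {
    "new_device": 1,
    "new_network": 1,
    "privacy_tool": 1,
    "failed_login_burst": 3,
    "shared_payment_fingerprint": 4,
}


def risk_level(signals, weights=WEIGHTS, medium_threshold=3, high_threshold=6):
    """Return 'low', 'medium', or 'high' for a set of observed signal names.

    A 'high' result requires at least two distinct known signals, so one
    unusual observation on its own never triggers the strongest response.
    """
    known = {s for s in signals if s in weights}
    score = sum(weights[s] for s in known)
    if len(known) >= 2 and score >= high_threshold:
        return "high"
    if score >= medium_threshold:
        return "medium"
    return "low"


# A student moving between campus and home Wi-Fi.
print(risk_level({"new_network"}))
# A business owner checking orders from another device and network.
print(risk_level({"new_device", "new_network"}))
# Several strong signals appearing together.
print(risk_level({"failed_login_burst", "shared_payment_fingerprint", "new_device"}))
```

With these assumed weights, the student and the business owner both stay at "low", while the combined pattern reaches "high". Even the heaviest single signal is capped at "medium" by design.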

Fair access also matters legally and commercially. If a platform blocks too many legitimate users, it loses revenue and invites complaints. If it allows abuse to spread, it loses trust. The smarter path sits between those mistakes, and it depends on review systems that can read behavior with care.

Better Reviews Help Teams Act Faster Without Overreacting

Speed matters in platform security, but panic creates bad decisions. A strong review process gives teams enough confidence to act quickly while leaving room for human judgment where the risk is unclear. That is where mature platforms separate themselves from reactive ones.

What a review workflow should do after a flag

A flag is not a verdict. It is a signal that something deserves attention. The review workflow should decide what happens next: allow, challenge, limit, queue, suspend, or escalate to a human specialist.

For example, a U.S. payments platform may see an account adding several new cards, changing shipping details, and attempting high-value purchases within minutes. The right response may not be an instant ban. A step-up verification, temporary hold, or manual check may stop fraud while preserving a legitimate customer relationship.

The key is proportional response. Low-risk oddities need light friction. High-risk patterns need fast containment. Unclear cases need review trails so teams can learn from outcomes instead of repeating the same uncertain call every week.
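A proportional workflow can be sketched as a function that turns a flag into one of the actions named above and records every decision for later review. Everything specific here is an assumption for illustration: the `Flag` fields, the idea of separate risk and confidence scores, and the cutoff values.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    CHALLENGE = "challenge"  # step-up verification
    LIMIT = "limit"          # temporary hold
    QUEUE = "queue"          # manual check
    SUSPEND = "suspend"
    ESCALATE = "escalate"    # hand to a human specialist


@dataclass
class Flag:
    # Hypothetical fields; scores are assumed to arrive from upstream detection.
    account: str
    risk: float                # 0.0 (benign) to 1.0 (almost certainly abuse)
    confidence: float          # 0.0 to 1.0, how sure the detector is
    high_impact: bool = False  # e.g. the action would hold a payment


def decide(flag, trail):
    """Pick a proportional action and append the decision to a review trail."""
    if flag.confidence < 0.5:
        action = Action.QUEUE      # unclear case: let a person look
    elif flag.risk < 0.3:
        action = Action.ALLOW
    elif flag.risk < 0.6:
        action = Action.CHALLENGE  # light friction for low-risk oddities
    elif flag.risk < 0.85:
        action = Action.LIMIT
    elif flag.high_impact:
        action = Action.ESCALATE   # high stakes: a specialist decides
    else:
        action = Action.SUSPEND    # fast containment
    trail.append((flag.account, flag.risk, flag.confidence, action.value))
    return action


trail = []
print(decide(Flag("acct-1", risk=0.1, confidence=0.9), trail))
print(decide(Flag("acct-2", risk=0.7, confidence=0.9), trail))
print(decide(Flag("acct-3", risk=0.9, confidence=0.9, high_impact=True), trail))
print(decide(Flag("acct-4", risk=0.9, confidence=0.2), trail))
print(len(trail))
```

The trail is the part that pays off over time: it lets a team compare each action with what reviewers later found, rather than making the same uncertain call again.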

Why human reviewers still matter in edge cases

Machines are strong at pattern recognition, but people understand context, intent, and tone in ways systems still miss. A human reviewer can see when a heated comment is not coordinated abuse, when a business account has a real operational reason for unusual activity, or when a spike comes from a local news event.

This does not mean every flag needs a person. That would bury the team. Human review belongs where the cost of a wrong call is high: account closures, identity concerns, payment holds, marketplace seller suspensions, or content decisions that affect public speech.

The best platforms treat reviewers as decision-makers, not cleanup crews. They give them clear evidence, risk scores, action history, and policy guidance. That respect improves accuracy, morale, and the platform’s ability to learn from messy cases.

Review Quality Becomes a Competitive Advantage

Security work often hides in the background, but users feel its quality every time they log in, buy something, post content, or recover an account. A platform with better review habits feels calmer. That calm becomes a market advantage, especially in crowded U.S. sectors where trust influences every click.

How better internal data improves every decision

Poor data makes smart people guess. Better internal data gives teams a shared view of what is happening across accounts, devices, content, payments, and support tickets. That shared view turns scattered incidents into a readable pattern.

A marketplace might discover that fake sellers often upload similar product images, use new bank details, and respond to buyers with repeated message structures. A media platform may find that spam rings post during narrow time windows and target the same local topics. These patterns become more visible when review data is clean and connected.
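As a rough illustration of spotting "repeated message structures", the sketch below compares seller messages with Python's standard `difflib.SequenceMatcher` after masking digits, so templated text with different numbers still lines up. The sample messages, the seller names, and the 0.85 threshold are invented for the example. Comparing every pair this way also grows quadratically, so a large platform would likely need a different technique.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations


def normalize(message):
    """Lowercase, mask digit runs, and collapse whitespace."""
    text = re.sub(r"\d+", "#", message.lower())
    return re.sub(r"\s+", " ", text).strip()


def similar_pairs(messages_by_seller, threshold=0.85):
    """Return seller pairs whose normalized messages are near-duplicates."""
    normalized = {s: normalize(m) for s, m in messages_by_seller.items()}
    pairs = []
    for a, b in combinations(sorted(normalized), 2):
        ratio = SequenceMatcher(None, normalized[a], normalized[b]).ratio()
        if ratio >= threshold:
            pairs.append((a, b))
    return pairs


# Hypothetical sample data.
messages = {
    "seller_a": "Hi! Item ships in 2 days. Pay via link 4821 for discount.",
    "seller_b": "Hi! Item ships in 3 days.  Pay via link 9917 for discount.",
    "seller_c": "Thanks for asking, the lamp is brass and about 14 inches tall.",
}

print(similar_pairs(messages))
```

A match like this is a signal for review, not proof of fraud, since honest sellers may share a template from the same listing tool.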

Better data also helps teams stop fighting yesterday’s battle. Attackers change tactics once old tricks stop working. Review systems need feedback loops from support, fraud, moderation, engineering, and policy teams so detection keeps moving with the threat.
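A feedback loop can start as simply as measuring how often each detection rule turns out to be right once a reviewer has looked at the case. The sketch below assumes review outcomes are available as `(rule_name, confirmed_abuse)` pairs. The rule names, the sample data, and the cutoffs are hypothetical.

```python
from collections import Counter


def rule_precision(outcomes):
    """Return {rule: share of its flags that reviewers confirmed as abuse}."""
    flagged = Counter()
    confirmed = Counter()
    for rule, was_abuse in outcomes:
        flagged[rule] += 1
        if was_abuse:
            confirmed[rule] += 1
    return {rule: confirmed[rule] / flagged[rule] for rule in flagged}


def rules_to_revisit(outcomes, min_precision=0.5, min_samples=4):
    """List rules with enough reviewed flags and a low confirmation rate."""
    outcomes = list(outcomes)  # allow a one-shot iterable to be read twice
    flagged = Counter(rule for rule, _ in outcomes)
    precision = rule_precision(outcomes)
    return sorted(
        rule
        for rule, p in precision.items()
        if flagged[rule] >= min_samples and p < min_precision
    )


# Hypothetical reviewer outcomes: (rule that fired, was it really abuse?)
outcomes = (
    [("rotating_login", True)] * 4
    + [("rotating_login", False)]
    + [("new_device", False)] * 4
    + [("new_device", True)]
    + [("rare_rule", False)]
)

print(rule_precision(outcomes))
print(rules_to_revisit(outcomes))
```

The `min_samples` guard keeps a rule with a single reviewed flag from being judged too early. Rules that do make the list are candidates for retuning, which is where input from support, fraud, and policy teams comes in.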

Why stronger reviews support growth instead of slowing it

Some leaders fear security friction because they think it hurts conversion. Bad friction does. Smart friction protects growth by keeping the platform usable for real people and expensive for attackers.

A checkout challenge shown to every customer will hurt sales. A challenge shown only when behavior carries clear risk can save revenue, reduce chargebacks, and protect inventory. The same logic applies to account creation, posting, messaging, and password recovery.

Growth built on polluted activity does not last. Fake signups inflate dashboards, spam drives away users, and weak controls attract repeat abuse. Better review systems make growth cleaner, and clean growth is easier to defend when investors, partners, regulators, or customers ask hard questions.

Conclusion

Online platforms in the United States are under constant pressure to welcome real users while keeping bad actors out. That tension will not disappear as traffic grows, tools spread, and attackers become more patient. The platforms that win will not be the ones that block the most activity. They will be the ones that understand activity with the most discipline.

Automated activity reviews give teams a way to see hidden patterns, respond with proportion, and protect the user experience before trust starts leaking away. Stronger review systems also force a healthier business habit: they make teams care about the quality of engagement, not only the size of it. That shift matters. A platform full of suspicious activity is not growing; it is drifting.

The next step is clear: audit where your platform reviews behavior today, find the gaps between detection and action, and build a review process that protects real users without making them fight the system.

Frequently Asked Questions

What are automated activity reviews for online platforms?

They are systems that examine user behavior, account actions, traffic patterns, and risk signals to detect suspicious activity. They help platforms spot abuse faster than manual checks alone, especially when harmful behavior spreads across many accounts or sessions.

Why do online platforms need bot traffic review?

Bot traffic review helps platforms separate useful automation from harmful behavior such as scraping, spam, credential attacks, and fake engagement. Without it, teams may mistake artificial traffic for real growth and miss abuse until users start complaining.

How do platform security checks improve user safety?

Platform security checks reduce exposure to fake accounts, account takeovers, spam, payment abuse, and suspicious content behavior. They create safer digital spaces by flagging risky actions before they affect large groups of users.

What is automated abuse detection used for?

Automated abuse detection is used to find harmful patterns across accounts, devices, transactions, posts, messages, and login attempts. It helps teams respond faster while reserving human review for cases that need judgment.

How do user trust signals help platforms make better decisions?

User trust signals show whether people feel safe enough to keep using the platform. Complaints, suspicious reports, failed logins, chargebacks, and behavior changes can reveal deeper problems that traffic numbers alone may hide.

Can automated reviews accidentally block real users?

Poorly designed systems can block legitimate users, especially when they rely on one signal too heavily. Better systems use layered evidence, risk levels, and review paths so unusual behavior does not automatically become punishment.

Should human moderators still review automated flags?

Human reviewers should handle high-impact or unclear cases, such as account suspensions, payment holds, identity issues, or sensitive content decisions. Automation can sort and rank risk, but people still add judgment where context matters.

How can a business improve automated platform reviews?

Start by mapping the riskiest user actions, then connect data from login, payment, content, support, and account behavior. Review false positives often, refine response levels, and make sure security decisions protect users without adding needless friction.